Migrating to your own WordPress.org account - things that will suck and how to avoid them

I’ve recently been migrating my blog from WordPress.com hosting to my own WordPress.org account. I tried a few things, several of which did not work well, and I hope to help you avoid them if you attempt the same move.

Is your SCCM SQL stuck in Evaluation mode? Don't despair!

Have you ever wondered what happens when you install SCCM 2012 or 2007 on top of SQL Server and choose ‘Evaluation mode’, then forget to enter the SQL Server product key?

Automatically Delete old IIS logs w/ PowerShell

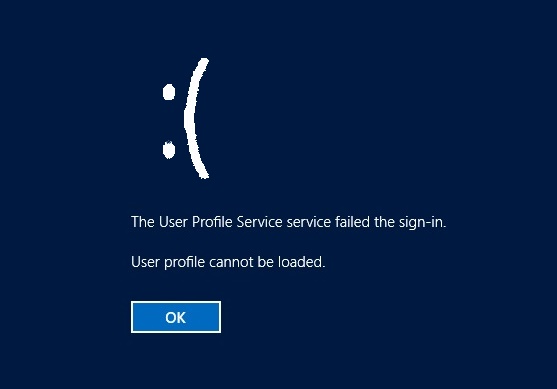

I’ve seen one too many servers hobbled by a runaway IIS installation. By default, if IIS is enabled and allowed to store its log files, it’s only a matter of time before they consume every scrap of space on the C: drive, leaving no room even for a new user profile to be created! When this happens, no one can log on to the machine unless their profile already exists there. It’s sad. It’s so sad, it even has a frowny face on Server 2012 systems.

Automatically move old photos out of DropBox with PowerShell to free up space

I love Dropbox. We all love Dropbox. It even comes preinstalled on our phones!

Impractical One-liner Challenge #2

It’s back, your favorite Friday PowerShell diversion!

SCCM 2012 Log File Quick Reference Chart
